If you’re doing SEO, then you probably know about Screaming Frog. Screaming Frog has long been the gold standard in website crawling for most sites.

But what happens when Screaming Frog isn’t enough for you any more? Are there alternatives to Screaming Frog that you should know about that may suit your needs better?

Absolutely, and that’s what I will cover today.

Before I go any further, I will say that any serious SEO should have a Screaming Frog subscription.

Screaming Frog is made by real SEOs and is a great tool to have at your disposal when you need to do a quick crawl of your site. The free version lets you crawl up to 500 URLs, but you don’t get crawl scheduling, JavaScript rendering, AMP crawling, and some other important features.

And at just £149 GBP (~$180) for an annual license, we recommend that you buy it. It’s worth it.

First let me show you Screaming Frog as a tool, then I’ll go into the alternatives.


What Screaming Frog is great for

I use Screaming Frog for a few major tasks and in a few specific circumstances.

I rarely use Screaming Frog for a full website crawl because I mostly work on websites with six or seven figures of pages in the search index. In the past Screaming Frog had trouble with large sites, though in recent years they added a database storage mode that lets it crawl large sites. Once I tried this feature, I was able to crawl a 147,000-page website relatively quickly without rendering my machine useless.

This feature is extremely valuable, and when coupled with their other tools like XML sitemap building and various specific exports like Insecure Content (for checking HTTPS), canonical errors, and orphaned pages, Screaming Frog is a very real contender for the best crawler out there. I literally use it every day.

I use Screaming Frog for:

- Quick crawls of a small site

- Crawls of a section of a site

- Crawling lists of URLs to gather their meta information

- Building sitemaps from lists of URLs

- Understanding at a high level how well utilized the main meta elements are for pages and templates on sites.
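For the sitemap-building use case, here is a minimal sketch of what turning a list of URLs into an XML sitemap looks like under the hood. This is not how Screaming Frog does it internally; the function name `build_sitemap` and the example.com URLs are assumptions for illustration. Only the `urlset`/`url`/`loc` structure and the namespace come from the public sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a sitemap XML string for an iterable of absolute URLs.

    Blank lines and exact duplicates are skipped; order is preserved.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    seen = set()
    for url in urls:
        url = url.strip()
        if not url or url in seen:
            continue
        seen.add(url)
        # Each page becomes <url><loc>...</loc></url>.
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical URL list, e.g. pasted from a spreadsheet export.
sitemap_xml = build_sitemap([
    "https://example.com/",
    "https://example.com/about",
    "https://example.com/",  # duplicate, dropped
])
```

A real sitemap file would also start with an XML declaration and stay under the protocol’s per-file URL limit, both of which a dedicated tool handles for you.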

Screaming Frog is great for the SEOs and marketers who need all the data and then want to manipulate it in Excel or another tool. This can be great for auditing and creating lists of URLs that need certain fixes or have issues.

Screaming Frog’s main drawbacks, IMO, are that it doesn’t scale to large sites and it only provides you the raw data. Screaming Frog is by SEOs for SEOs, and it works great in those circumstances. But if you are looking for graphs that you can present to your executive suite, Screaming Frog is not the tool for that.

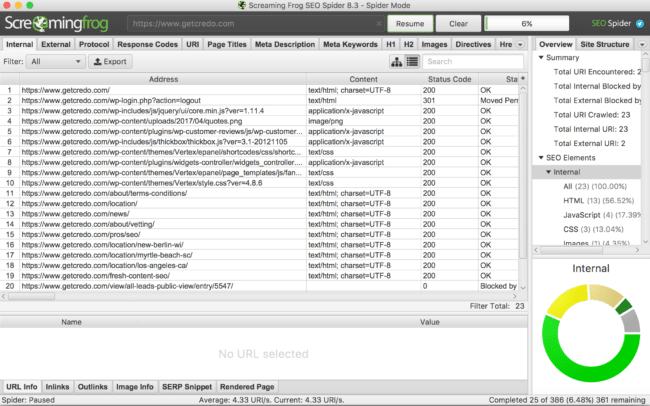

Here’s a screenshot of Screaming Frog’s UI at the beginning of a crawl:

As it goes deeper, you can do very useful things like:

- Identify your URLs returning non-200 status codes;

- Identify URLs that are not in sitemaps;

- Visualize at a basic level site depth (and the data is provided to visualize yourself);

- Find pages that are no-indexed that should not be.
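If you export the crawl data and want to run these checks yourself, the logic is simple. The sketch below assumes each crawled page is a dictionary with hypothetical `url`, `status`, and `meta_robots` keys; a real export will use its own column names, so treat this as an illustration rather than a drop-in script.

```python
def triage(rows, sitemap_urls):
    """Bucket crawled pages into the checks listed above.

    rows: iterable of dicts with 'url', 'status' and optional 'meta_robots'.
    sitemap_urls: iterable of URLs that appear in the XML sitemaps.
    """
    sitemap_urls = set(sitemap_urls)
    report = {"non_200": [], "not_in_sitemap": [], "noindexed": []}
    for row in rows:
        url = row["url"]
        if row["status"] != 200:
            report["non_200"].append(url)
            continue  # the other checks only make sense for live pages
        if url not in sitemap_urls:
            report["not_in_sitemap"].append(url)
        if "noindex" in row.get("meta_robots", "").lower():
            report["noindexed"].append(url)
    return report


# Hypothetical crawl rows and sitemap contents.
rows = [
    {"url": "https://example.com/", "status": 200, "meta_robots": "index, follow"},
    {"url": "https://example.com/old", "status": 404, "meta_robots": ""},
    {"url": "https://example.com/pricing", "status": 200, "meta_robots": "NOINDEX, follow"},
    {"url": "https://example.com/blog", "status": 200, "meta_robots": ""},
]
report = triage(rows, ["https://example.com/", "https://example.com/pricing"])
```

The `noindexed` bucket still needs a human to decide which of those pages were meant to be indexed.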

Once again, Screaming Frog is a great tool and one that I use multiple times per week. In fact, it may be my most used SEO tool on a weekly basis.

But like I said, it’s not the best for everything.

So while any professional SEO or inhouse team doing SEO should have a Screaming Frog license, there are others to consider as well depending on your business.

Top Screaming Frog alternatives

For as many SEOs as there are out there on the internet, there are as many tools. SEOs can’t help but build tools, which is great because it pushes the industry forward.

As such, there are a handful of crawlers that people tend to use alongside or instead of Screaming Frog. Please note that I am not attempting to cover every option out there. There are many others that do not have nearly the level of adoption of these tools, and listing them all would make this post far too long.

The true focused site-crawling alternatives we recommend are:

- DeepCrawl

- SiteBulb

DeepCrawl

When a site I am working on has outgrown the technical abilities of Screaming Frog, DeepCrawl is my tool of choice. If you sign up with this link (and use the code GETCREDO) then you can get 10% off as well.

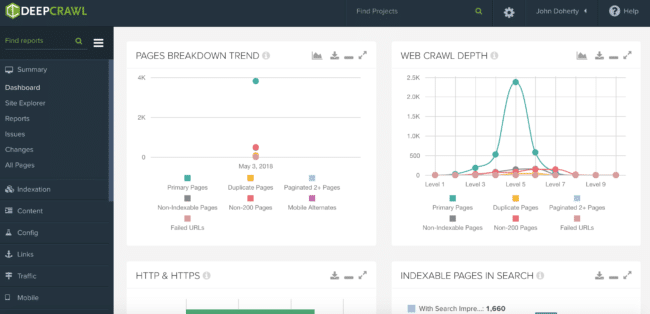

DeepCrawl is an enterprise SEO crawler. They are singularly focused on providing a fantastic cloud-based crawling platform that helps you keep on top of changes on your site that may adversely affect your SEO.

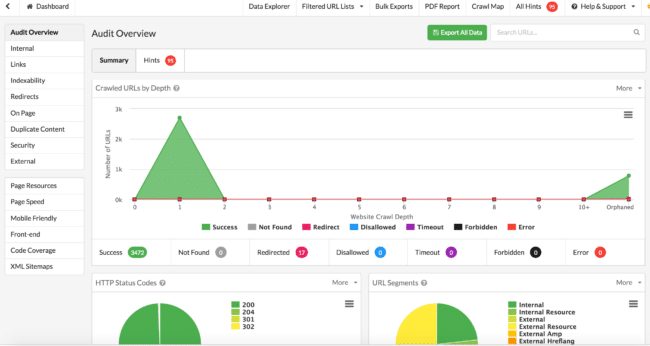

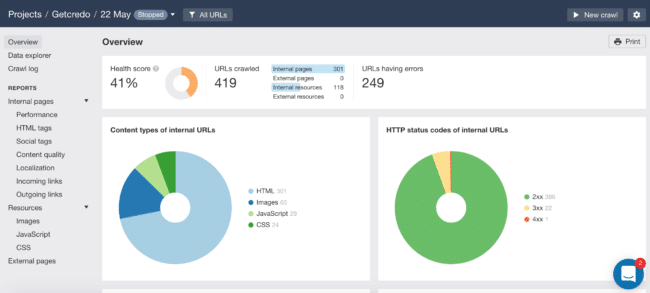

Here is their dashboard:

One of the things that many crawlers have, yet DeepCrawl does especially well, is an easy breakdown in the sidebar of common issues, grouped by Indexation, Content, Links, and much more.

And like any decent crawler these days, you can connect Search Console as well as Google Analytics so that you can prioritize fixes based on impressions or past impressions (if you’ve lost visibility!).

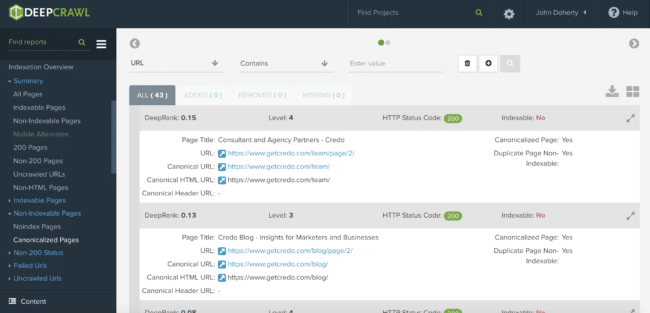

Here’s an example of an issue that DeepCrawl flagged up for Credo that I was unaware of, even though I crawl the site pretty often:

In this specific example, DeepCrawl showed me that I have an issue with pagination on my category pages where the second page (?page=2) links to the first with a parameter (?page=1) instead of directly to the first page in the series. This is a crawl budget issue that they helped me identify.
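The fix for this kind of issue is to make every link back to the first page point at the bare URL. Here is a minimal sketch of that normalization, assuming the pagination parameter is called `page` as in the example above; the function name `canonical_page_url` and the example.com URLs are hypothetical, not Credo’s actual code.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def canonical_page_url(url, param="page"):
    """Drop `param=1` so the first page in a series has a single URL.

    Other query parameters and other page numbers are left untouched.
    """
    parts = urlsplit(url)
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not (key == param and value == "1")
    ]
    return urlunsplit(parts._replace(query=urlencode(query)))


first = canonical_page_url("https://example.com/category?page=1")
second = canonical_page_url("https://example.com/category?page=2")
mixed = canonical_page_url("https://example.com/category?sort=asc&page=1")
```

With this in place, `?page=1` collapses to the plain category URL while `?page=2` and unrelated parameters such as `sort` pass through unchanged.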

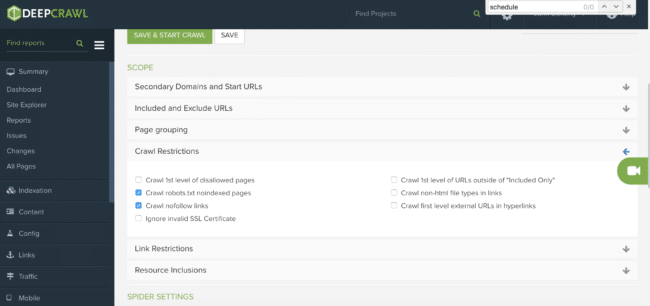

Crawl controls

As far as I can tell, DeepCrawl has all of the same crawl controls that the other crawlers have, such as:

- Ignoring robots.txt

- Crawling as specific search engine bots (to check for cloaking or indexing issues)

- Uploading sitemaps to crawl specifically to check for issues

They also have a “Stealth mode”, which limits the crawl to 1 URL every 3 seconds. If you have ever brought down a website with Screaming Frog by crawling it too hard (you know you have), then this can be a lifesaver for you.
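To show what a throttle like that amounts to, here is a rough sketch of a crawl loop that starts at most one request every three seconds. It is not DeepCrawl’s implementation. The `fetch` callable is a placeholder you would supply yourself, and the `sleep` and `clock` parameters exist only so the timing can be swapped out when testing.

```python
import time


def throttled_fetch(urls, fetch, delay_seconds=3.0,
                    sleep=time.sleep, clock=time.monotonic):
    """Call fetch(url) for each URL, starting requests at least
    delay_seconds apart. Returns a list of (url, result) pairs.
    """
    results = []
    last_start = None
    for url in urls:
        if last_start is not None:
            # Wait out whatever is left of the delay since the last request began.
            wait = delay_seconds - (clock() - last_start)
            if wait > 0:
                sleep(wait)
        last_start = clock()
        results.append((url, fetch(url)))
    return results


# Usage (fetch is whatever HTTP call you prefer; hypothetical here):
# statuses = throttled_fetch(url_list, fetch=my_fetch_function)
```

Measuring from the start of the previous request means a slow server is not penalized twice: if a page takes two seconds to respond, the loop only waits one more.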

Scheduling crawls

Finally, one of the things I love about DeepCrawl is the ability to schedule crawls. Many tools offer this (Moz’s campaigns do it automatically as well), but scheduling crawls so you can be proactive about your SEO and then coupling that with their insights can save you so much SEO time and pain.

DeepCrawl cost

DeepCrawl costs $89/mo at the low end and goes up to completely bespoke levels based on your needs. So they’re not cheap, but neither are issues on your site that literally cost your business money!

SiteBulb

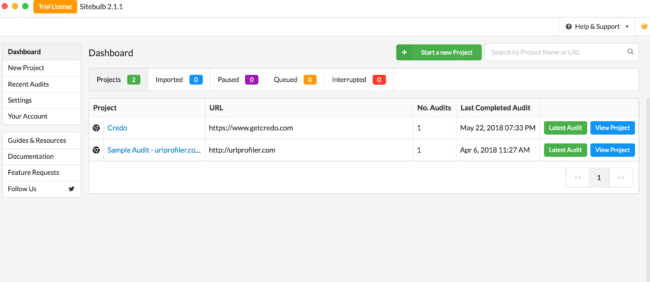

SiteBulb is a new player in the SEO crawler space. Developed by some SEOs out of the UK, many people over the last months have had very good things to say about it so I figured I’d take it for a spin and run a crawl.

Like Screaming Frog or Xenu, SiteBulb is a desktop tool, which means it runs on your own computer’s resources and not on servers that they own/rent/use. So you’ll likely have the same challenges with SiteBulb as with Screaming Frog or Xenu when it comes to resource constraints. Some SEOs have opted to run desktop crawlers like these in the cloud on a virtual machine to free up their own computer for other tasks.

SiteBulb overview

To do this comparison, I downloaded SiteBulb and signed up for their free 14-day trial (which exists in May 2018 when this was written). Upon opening it, SiteBulb feels like Screaming Frog and DeepCrawl had a baby.

I loaded up a crawl and let it go. Weirdly SiteBulb crashed on me (I have a 2016 Macbook Pro) and so I had to reopen it, but it took me about 10 minutes to realize it had even closed. This can be an issue with Screaming Frog as well, so I would say this is a common issue with computer-based instead of cloud-based crawlers.

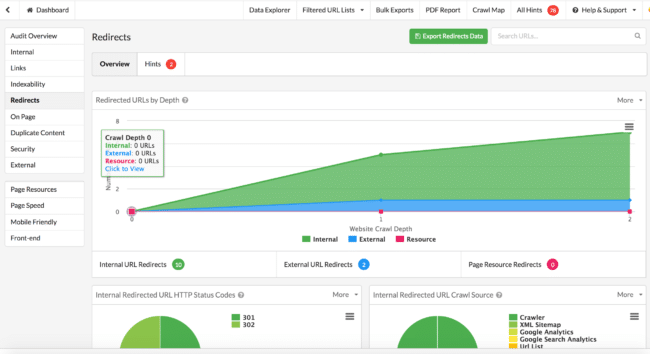

Update June 5: The SiteBulb team sent me a new version of the software that included a fix for the problem I ran into initially, which was that the software was picking up HubSpot and WordPress tags and that’s why it showed the initial crawl of everything at level 1. This update allowed me to do a full crawl and identify a lot of opportunities on my site, including many that I have already fixed onsite thanks to their recommendations.

It took me two tries to actually get a decent crawl of Credo. As I’ve said, I’ve crawled websites many times and know that every tool has a learning curve.

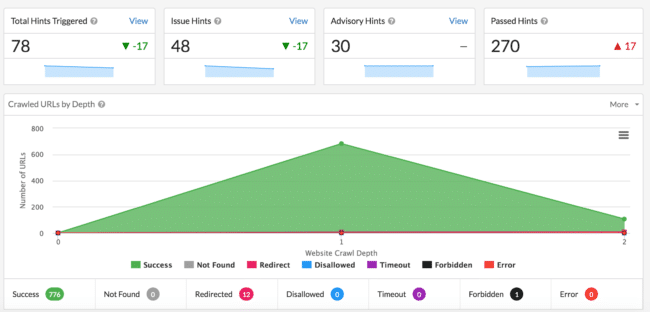

On the first crawl, I saw this dashboard with the completed crawl:

If you’ve been working as an SEO for a while or have run crawls with other tools, you’ll see from the start that this looks off. Compared with the DeepCrawl crawl, it’s completely different.

There is no way that a site like mine with a few thousand pages on it has all of the pages just one level below the homepage. I’m not sure what the issue is here, but the data as it stands here is wrong.
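For context, the “level” of a page is the fewest clicks needed to reach it from the homepage. Here is a minimal sketch of that calculation using a breadth-first search over a link graph. The `links` dictionary and its paths are made up for illustration and are not taken from either tool’s output.

```python
from collections import deque


def crawl_depths(links, start):
    """Return {page: depth}, where depth is the minimum number of clicks
    from `start`. `links` maps each page to the pages it links to.
    """
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical directory-style site structure.
links = {
    "/": ["/agencies", "/blog"],
    "/agencies": ["/agencies/seo"],
    "/agencies/seo": ["/agencies/seo/acme"],
    "/blog": ["/"],
}
depths = crawl_depths(links, "/")
```

On a site with category and listing pages you would expect a spread of depths like this, which is why a report placing every page at level 1 is a red flag.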

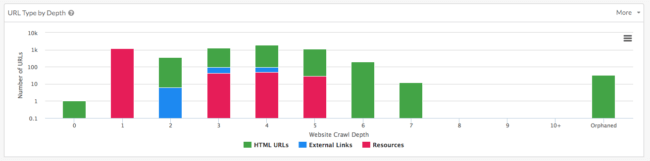

So I ran a second crawl by removing the initial settings to both crawl AND look at my sitemaps, and the crawl went much better (I stopped it early in the interest of time):

One thing I really appreciate about SiteBulb is that it’s no nonsense. They give you the data you need as an SEO with some graphs to help you understand how big of a problem something may be (all changes and recommendations for SEO on a site must be made in the context of the other potential issues as well), and then they present you the data:

SiteBulb even helped me discover a few pages that should be behind a login wall but were not, thus saving me some potential privacy issues.

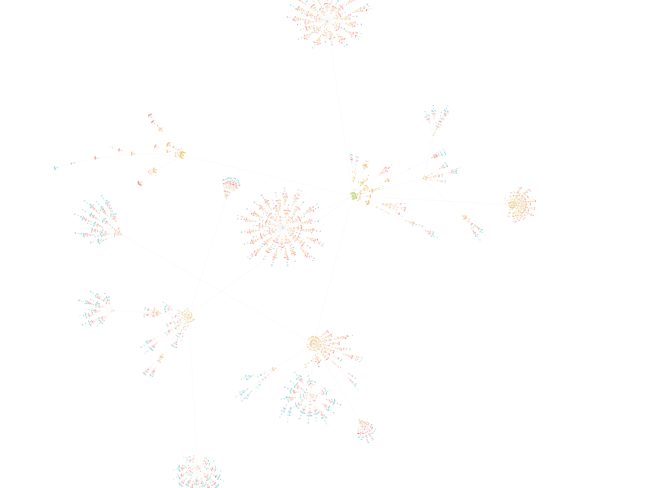

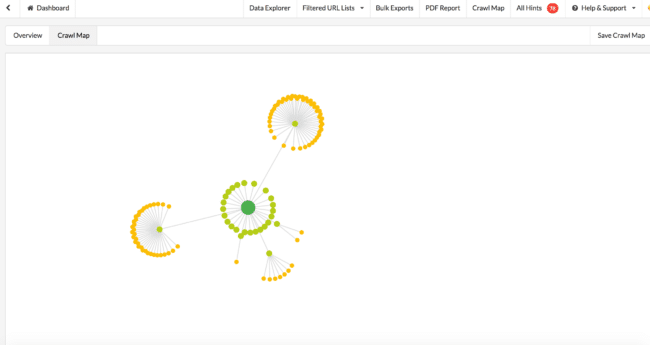

One of SiteBulb’s most celebrated features is their Crawl Maps, which I must be honest are super cool. This is only a partial crawl of my site, but this is already valuable information for me to know how well silo’d my content is:

This feature is amazing because previously it’s been very hard to get a good understanding of how your site is organized. You could do it using a combination of Gephi and Screaming Frog data, but having it all in one graph like this within the tool is a real bonus. Good work, SiteBulb.

Overall, SiteBulb is a big step up from Screaming Frog with better graphs and more accessible insight from the GUI, and the team over there is fantastic and listens to feedback and gets things done.

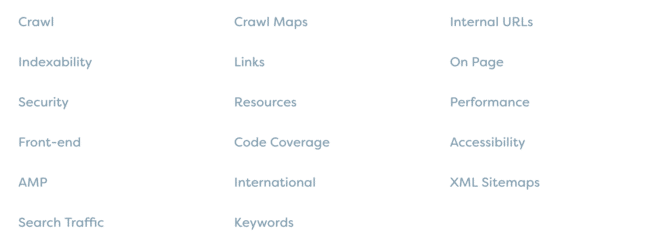

SiteBulb features

SiteBulb offers pretty much everything that one could imagine within an SEO site crawler. It is an SEO tool built for SEOs by SEOs, after all.

This is their list of features:

While this is a laundry list of features probably meant to convert on the front end, it does show you everything that SiteBulb is capable of. It’s a technical SEO tool that you can use to get both high-level graphs (to show to executives or flag up things to you as an SEO) as well as deeper straight data like you get from Screaming Frog.

SiteBulb price

SiteBulb currently (as of May 2018) is available for $35/mo with no minimum subscription required. They also have an initial 14 day free trial to let you take the tool for a spin.

SiteBulb is available for Mac and PC. Ubuntu/Chromebooks are not currently supported, which is fairly common for computer-based programs.

Note: Botify is another crawler that comes up time and time again against tools like DeepCrawl. I am a DeepCrawl user and not a Botify user. At some point I plan to get a Botify account and then can add Botify to this list of alternatives to Screaming Frog.

Special alternative mentions

I want to mention that many of the full-fledged digital marketing tool platforms out there have their own version of a site crawler.

The three that most businesses tend to use are:

- SEMrush

- Moz

- Ahrefs

SEMrush

If you’ve read Credo’s content before, you know I love SEMrush. It’s a full fledged digital marketing tool that I couldn’t do my work without, either on Credo or on client websites. I love them so much that you can get a 7 day free trial using this link.

Let’s be clear from the start that SEMrush provides a crawler as part of their subscription and within a campaign. SEMrush is not an on-demand crawler, so if you need that then use one of the dedicated crawlers above.

But if you want one tool that can do a lot of the auditing as well as crawl for you (albeit limited to a certain number of pages right now based on your SEMrush subscription level), read on.

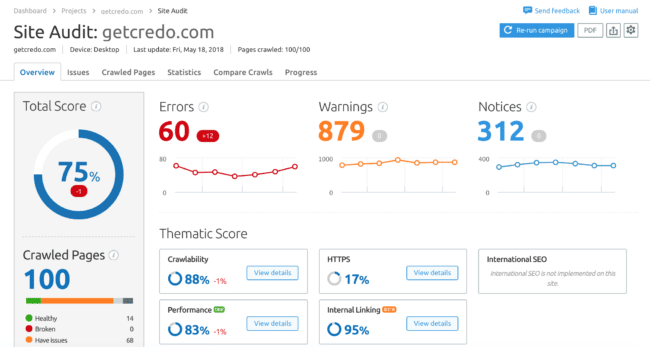

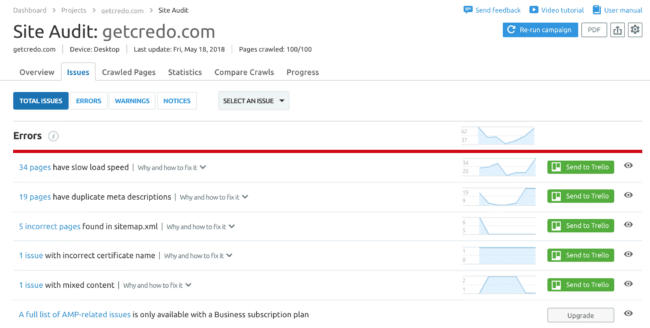

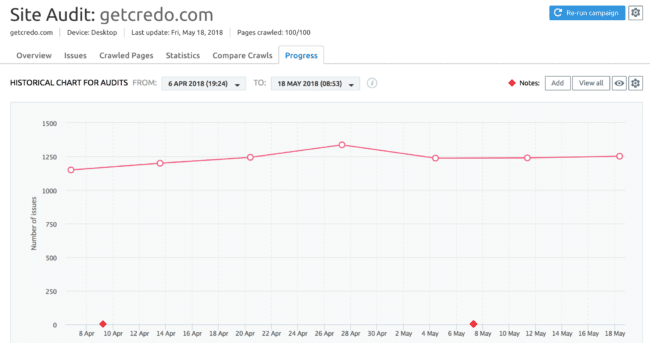

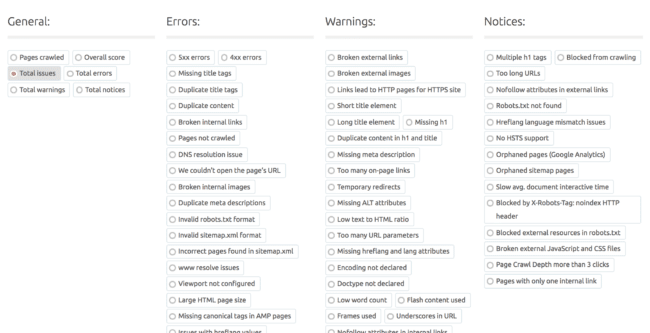

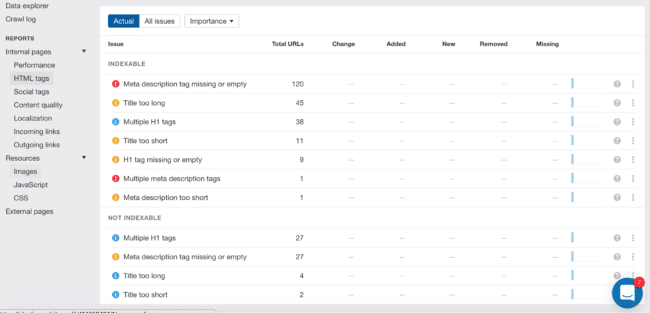

Within their campaigns, SEMrush has a Site Audit section for the site for which you have set up your campaign:

Under the Issues tab, they provide a high level view at the issues. This is actually a pretty useful view and I was able to get in and make some fixes on my site because of it:

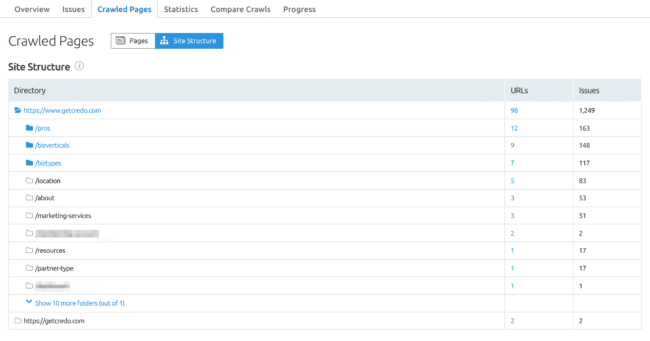

SEMrush also has a site structure feature which shows you a tree diagram of how your site is structured and issues by folder, which is really handy for prioritizing fixes:

Finally, SEMrush crawls your site every week, up to 100 pages on the level I am on (the bottom tier). They also have a Progress tab with an issues graph and a way to filter down to specific issues on the page:

This is why SEO is so complicated:

My final verdict here is that this site crawl by SEMrush, which is relatively new and constantly being upgraded, is useful in its current form and will likely only get better.

I do not like that it is limited by number of pages it will crawl, but if your site is less than 100 pages then I guess that is ok!

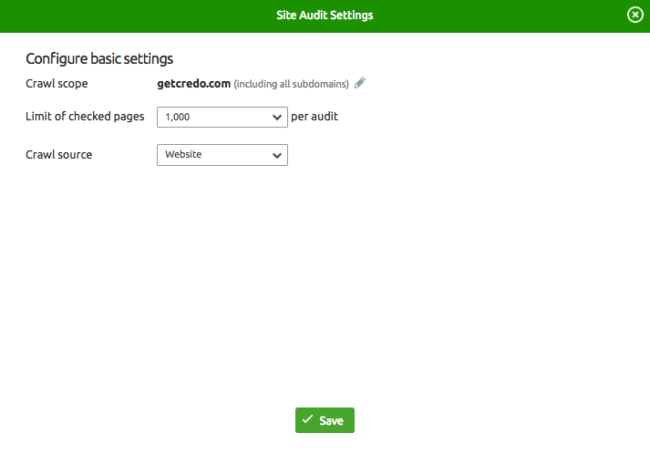

Edit: Conrad O’Connell pointed out that you can change the default number of pages to crawl. Navigate to your Audit then click the Settings wheel in the top right. You can change the number of pages crawled there:

My main gripe about SEMrush these days is that the site is pretty brutalistically designed. I’m hoping they eventually invest some of that recently raised VC money into a redesign to make the product as a whole more cohesive.

Check out SEMrush for a free 14 day trial

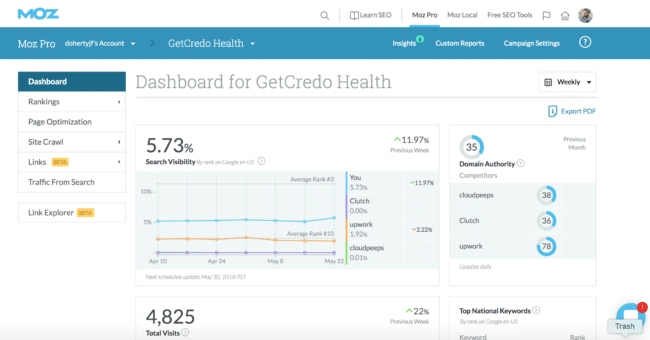

Moz Campaigns

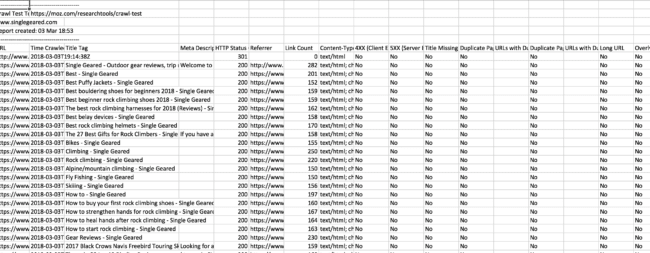

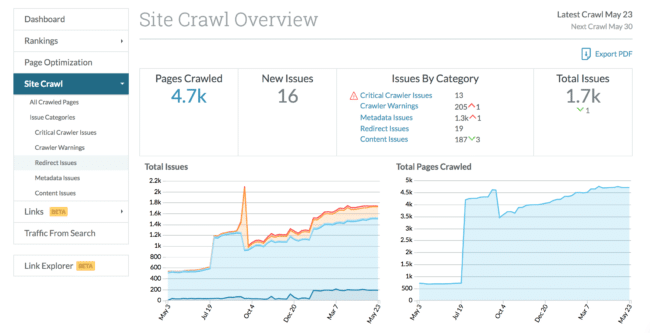

Moz’s main product, Pro, and its campaigns offer a weekly automated crawl that sends you reports on your site. Moz also offers a Crawl Test tool, which with a Pro subscription you can use to run a few site crawls per day of up to 3,000 pages. They say that you can schedule the crawl to happen every 24 hours or less often, but it is unclear how this works. The page needs some UI work.

With the Crawl Test you just receive a CSV download with all of the raw data, which is basically what you get from Screaming Frog but without any UI. If you know what you’re doing, this data is valuable. Otherwise, it’s just a bunch of data rows.

Moz’s Pro Campaigns are another thing though. It’s a full fledged cloud-based tool that automatically crawls your site each week, updates your rankings, and flags up issues for you.

Here is a screenshot of the dashboard:

Diving deeper into the legend on the left side, you can view rankings for terms you are tracking, get the page optimization score for specific URLs (and track that over time), view your site crawl statistics, and more.

Here’s the site crawl dashboard:

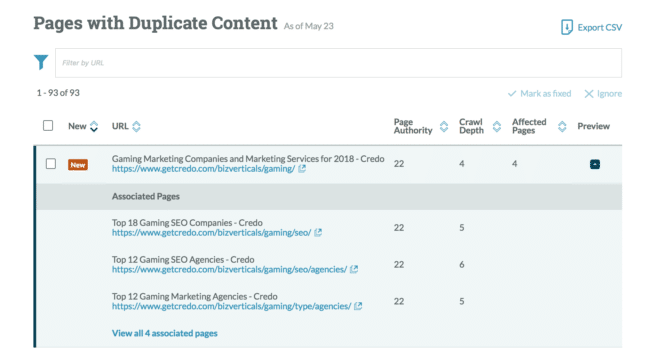

From there you can go deeper into specific issues, such as duplicate content:

One thing to note about Moz is that for specific things like duplicate content, they show near-duplicates as well as true duplicates. If you look at the URLs shown in the screenshot above, you will see that each of the pages targets a different term and also has slightly different data based on the filtering taking place. But Moz still shows them as duplicates.
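To see why filtered pages like these get flagged, here is a rough sketch of one common way to score near-duplicates: compare overlapping word triples (“shingles”) with Jaccard similarity. I have no idea which method Moz actually uses, so this is purely illustrative, and the page text below is invented.

```python
def shingles(text, size=3):
    """Return the set of overlapping word tuples of length `size`."""
    words = text.lower().split()
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a, b):
    """Similarity between two texts: shared shingles / all shingles."""
    sa, sb = shingles(a), shingles(b)
    if not sa and not sb:
        return 1.0  # two empty pages are trivially identical
    return len(sa & sb) / len(sa | sb)


# Two hypothetical filtered listing pages that differ by one word.
denver = "find the best seo agencies in denver for your business today"
austin = "find the best seo agencies in austin for your business today"
similarity = jaccard(denver, austin)
```

On real pages the shared template (navigation, footer, boilerplate copy) pushes the score much higher than a one-line example suggests, so a tool with a fixed threshold will lump location or filter variants together even though each one targets a different term.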

Moz’s crawler within their campaign can be quite useful for the right sites, such as small and medium size business websites or SaaS sites. For marketplaces/directories like mine, it’s less useful unfortunately.

Ahrefs

Finally, Ahrefs. I’ve mentioned Ahrefs many times on this site and think that they are a great tool. I use them almost as often as SEMrush for backlink and keyword research, mostly because the tool’s data is still solid and their user experience is fantastic.

That said, they’ve recently rolled out a Site Crawl very similar to SEMrush and Moz’s. Because Ahrefs is a player in the digital/SEO data and insights space, it’s worth a look.

As of late May 2018 when this post was being written, the Site Audit still has a “New” tag on it within the top navigation so this leads me to believe that the tool is in its infancy. Honestly, it feels that way too:

Here is an overall screenshot of their crawl:

They have all of the basic SEO stuff you’d expect:

But that’s about it, to be honest.

They have sections for performance (load time) broken out by depth of page, which I guess is useful. They also tell you if pages don’t have social tags on them (all of mine do, so it’s just a green circle). “Content quality” tells you about near-duplicate content just like Moz, and localization tells you about hreflang/international SEO tags if that applies to your site. Incoming links can tell you when you have internal nofollowed links (which can be legitimate!), and outgoing links tells you if you’re linking out to places that redirect.

I think the Ahrefs team is iterating on this, but I will not be using this feature of theirs in its current state.

What about Xenu Link Sleuth?

When I first started in SEO in 2010, Xenu Link Sleuth was the crawling tool of choice. After all, Moz Pro had just launched and Screaming Frog may not have even existed.

Back in 2010, a lot of SEO tools were very rough and had been built by practitioners. That means they did a great job, but they were often quite rough around the edges and hard to use.

It seems that Xenu has stayed this way, and I assume the founders just don’t need the money and moved on to more lucrative things. That, coupled with the rise of Screaming Frog plus major toolsets like SEMrush and Moz bringing cloud-based site crawls into their product portfolios, meant that potential and existing Xenu users got the same functionality elsewhere, bundled with a lot of other tools that they use day to day.

So what’s the verdict? What’s the best Screaming Frog alternative?

Out of the dedicated crawlers I have used, DeepCrawl and Screaming Frog are still my go-tos. I need to use SiteBulb more, but I could see myself phasing it in and possibly phasing out Screaming Frog. That said, Screaming Frog works well for me, costs about half as much as SiteBulb, and is not another monthly charge, which is nice.

For the full-fledged platforms, I think SEMrush is actually the best. That surprises me, but I find their insights to be higher quality than either Moz’s or Ahrefs’s in this case.

So there you go, all of the Screaming Frog alternatives that I’ve used and tested.